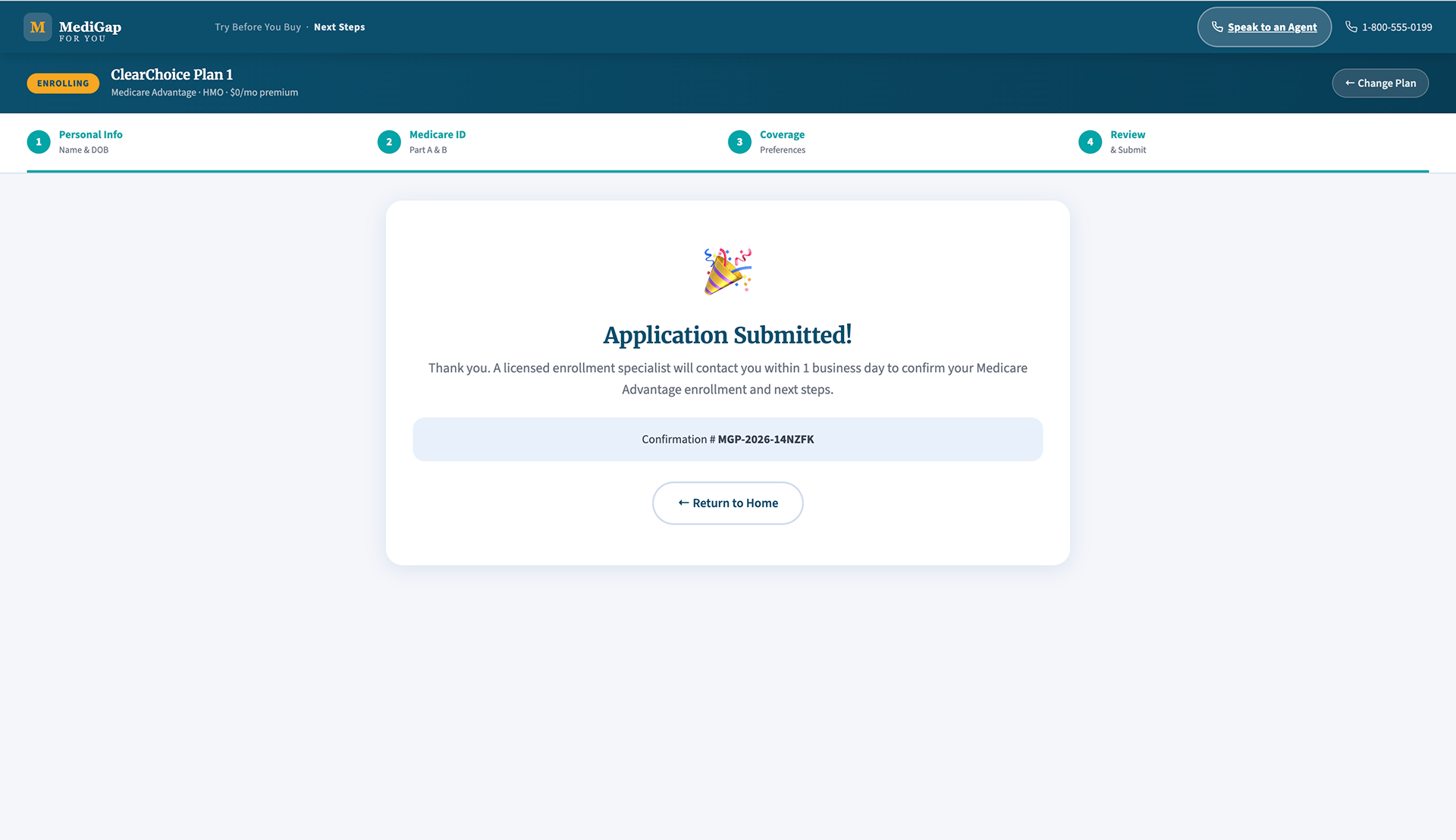

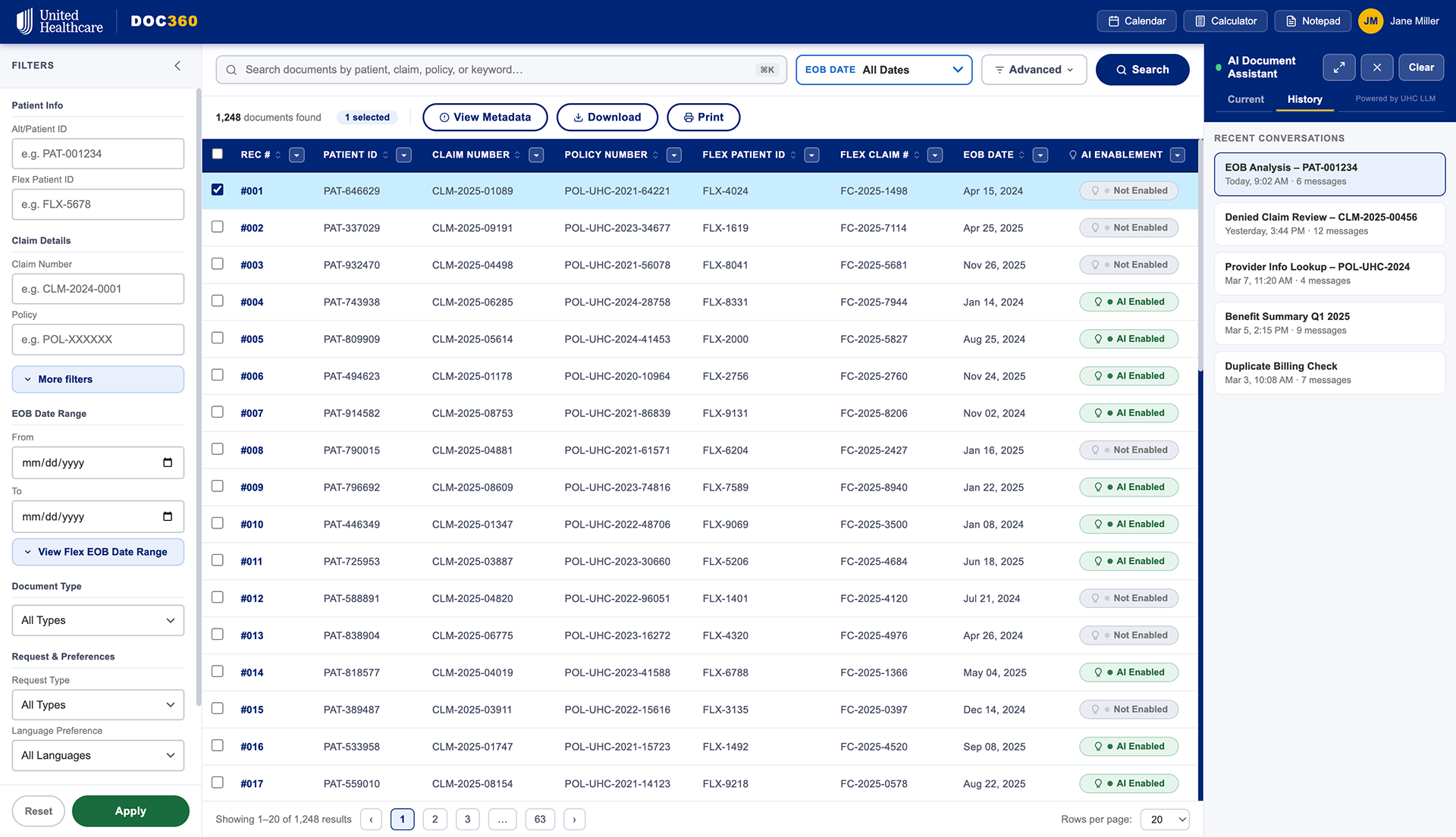

The main screen of Doc360. When the user hovers over an "AI Enabled" document, they see a brief summary with additional actions.

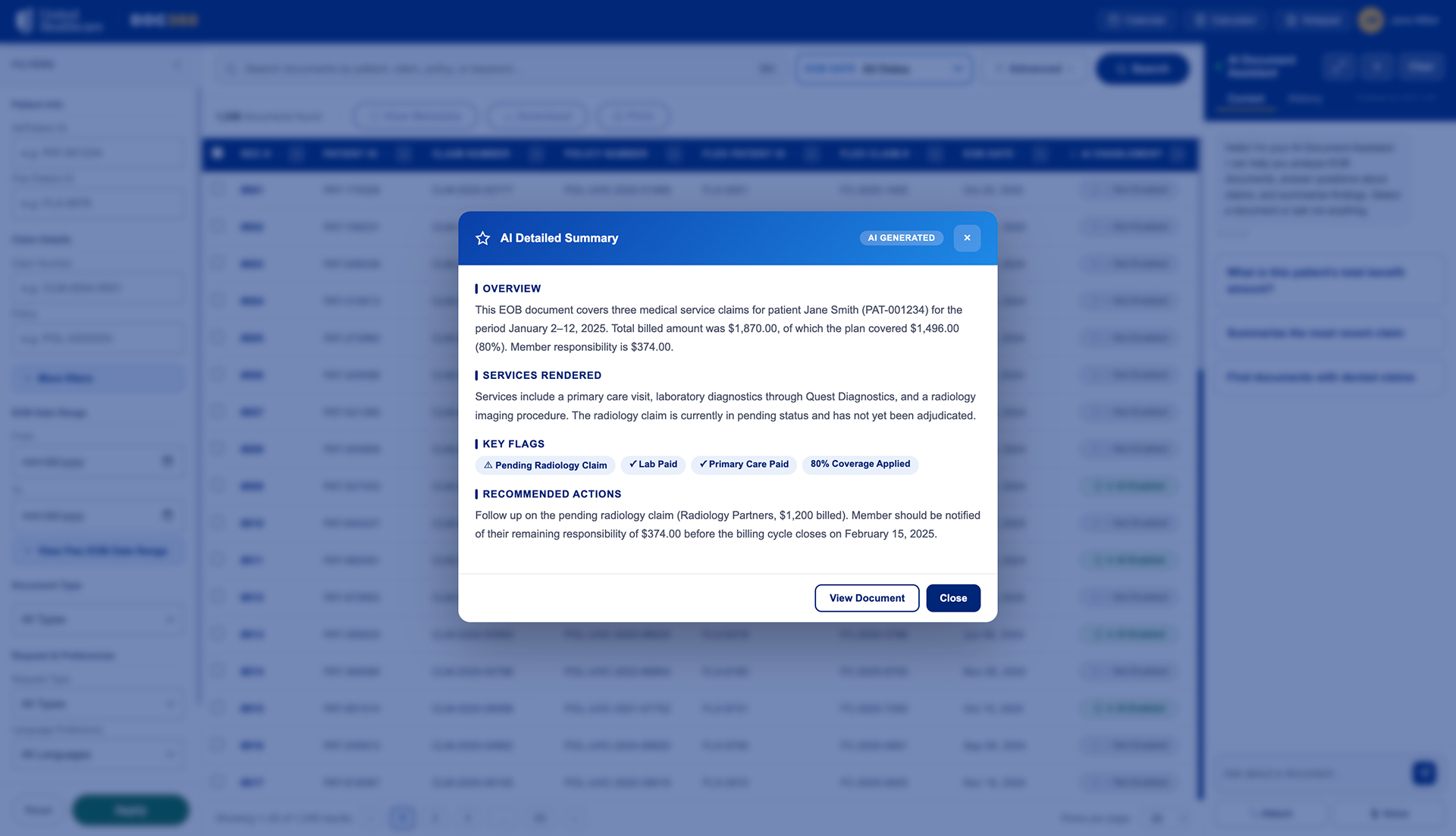

Upon selecting "View Brief Summary", the user can view a quick synopsis of the document.

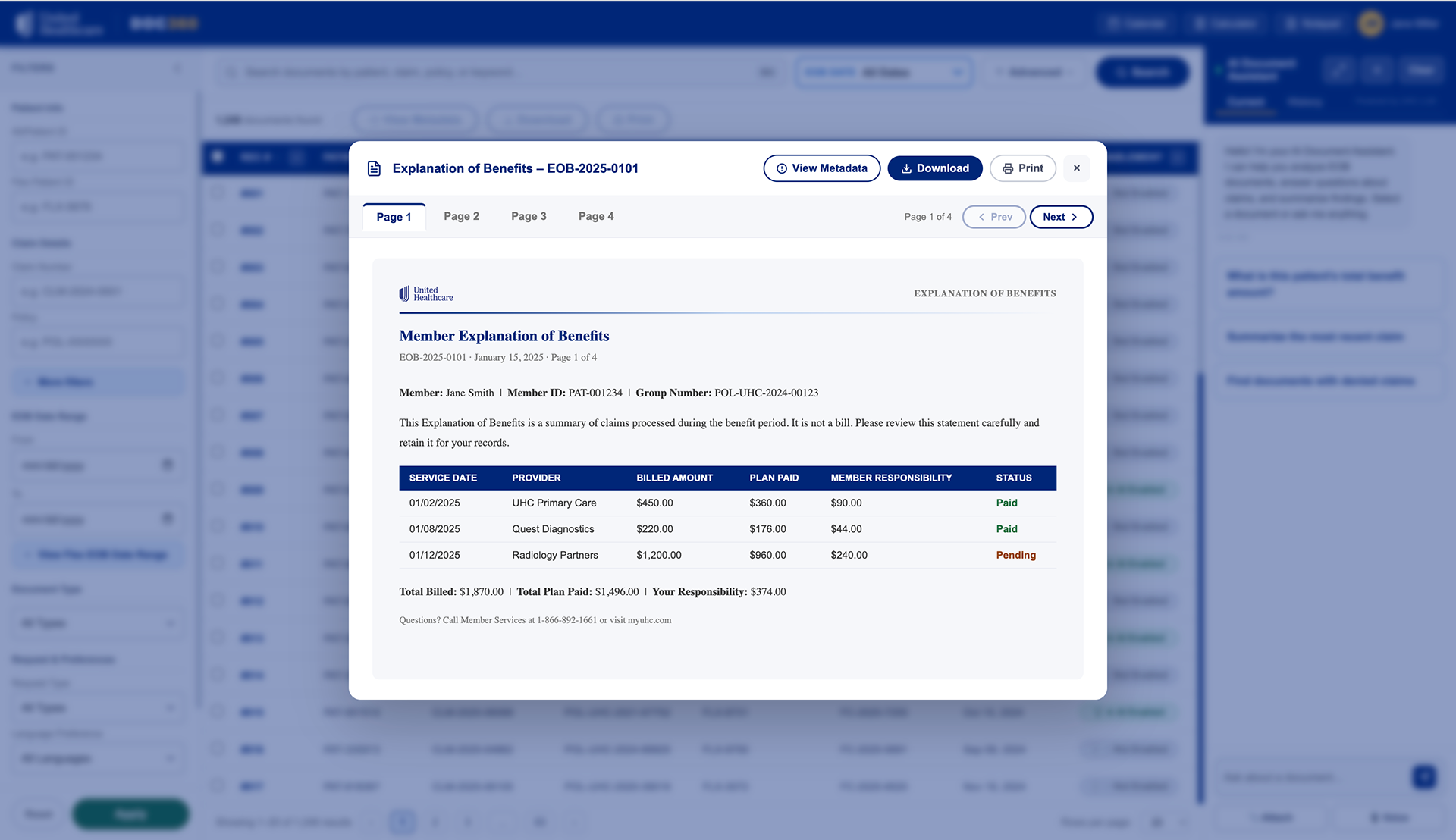

The user can also open the document in full, alongside other actions such as download, print, view metadata, and exit.

The AI Document Assistant has preloaded questions and can assist the user as needed.

In addition, the user's history is kept for convenience.

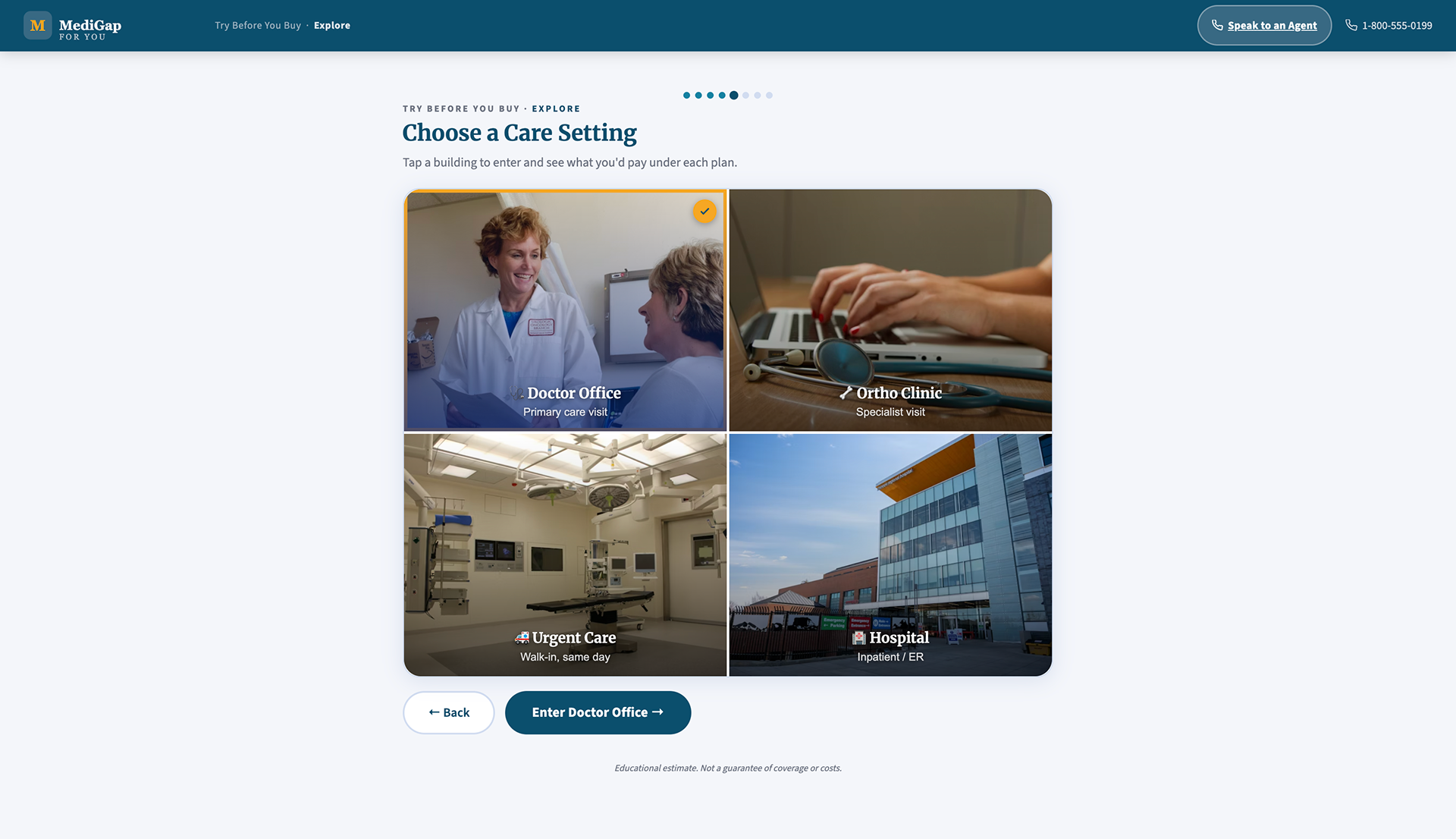

The main landing screen. Here, the user can take a 2-minute tour that helps them decide on a MediGap plan, or compare plans immediately.

During the walkthrough, the user answers 4 questions. They can respond by clicking an option, typing their own answer, or using voice-to-text.

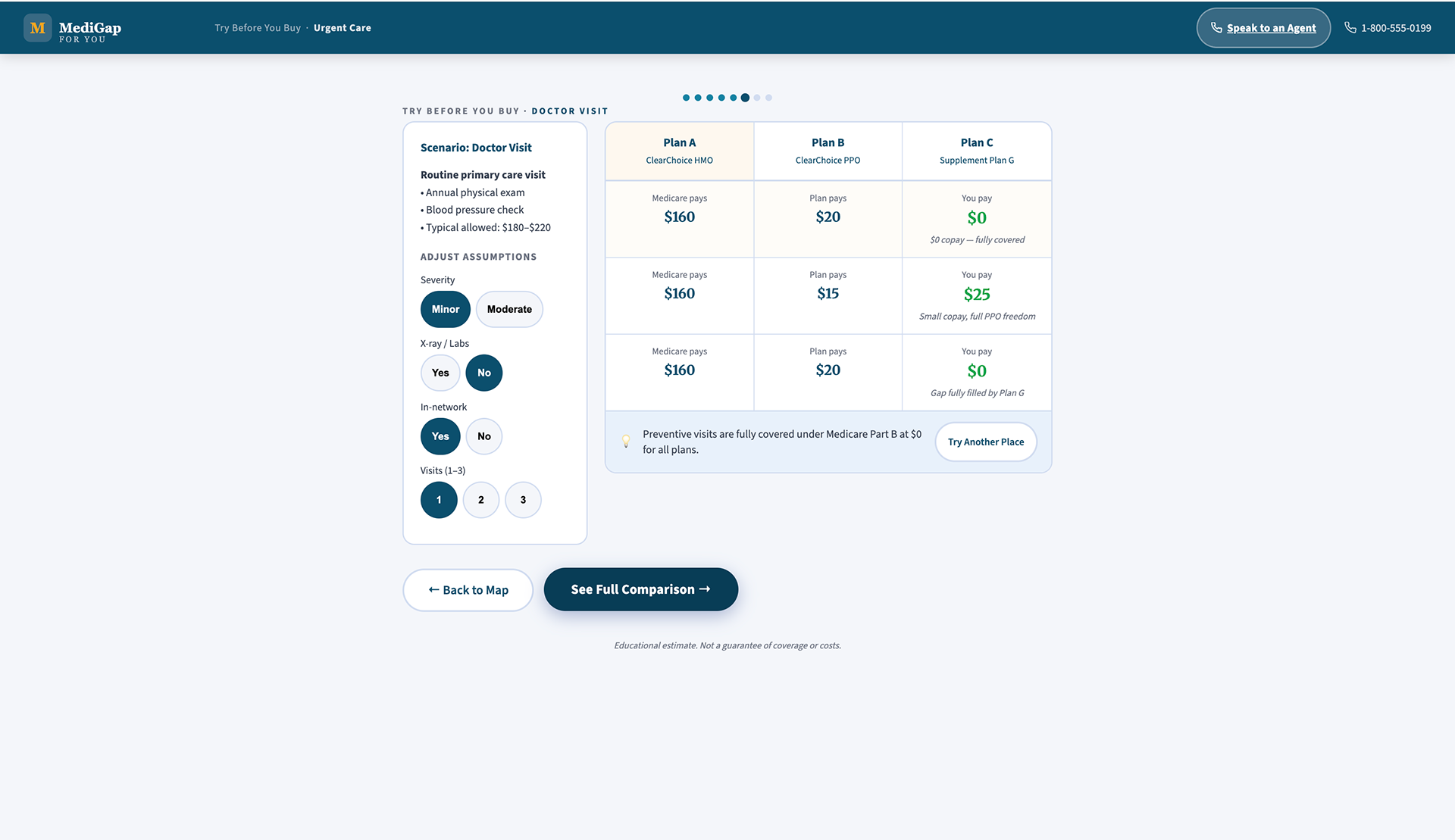

Here, the user can see their top 3 plans based on their responses. They can see more available plans in their area, customize assumptions, or watch a walkthrough of the plan - this is where our user will "try before they buy".

Upon clicking "Watch Walkthrough", the user gets an immersive walkthrough of the plan, allowing them to "try before they buy".

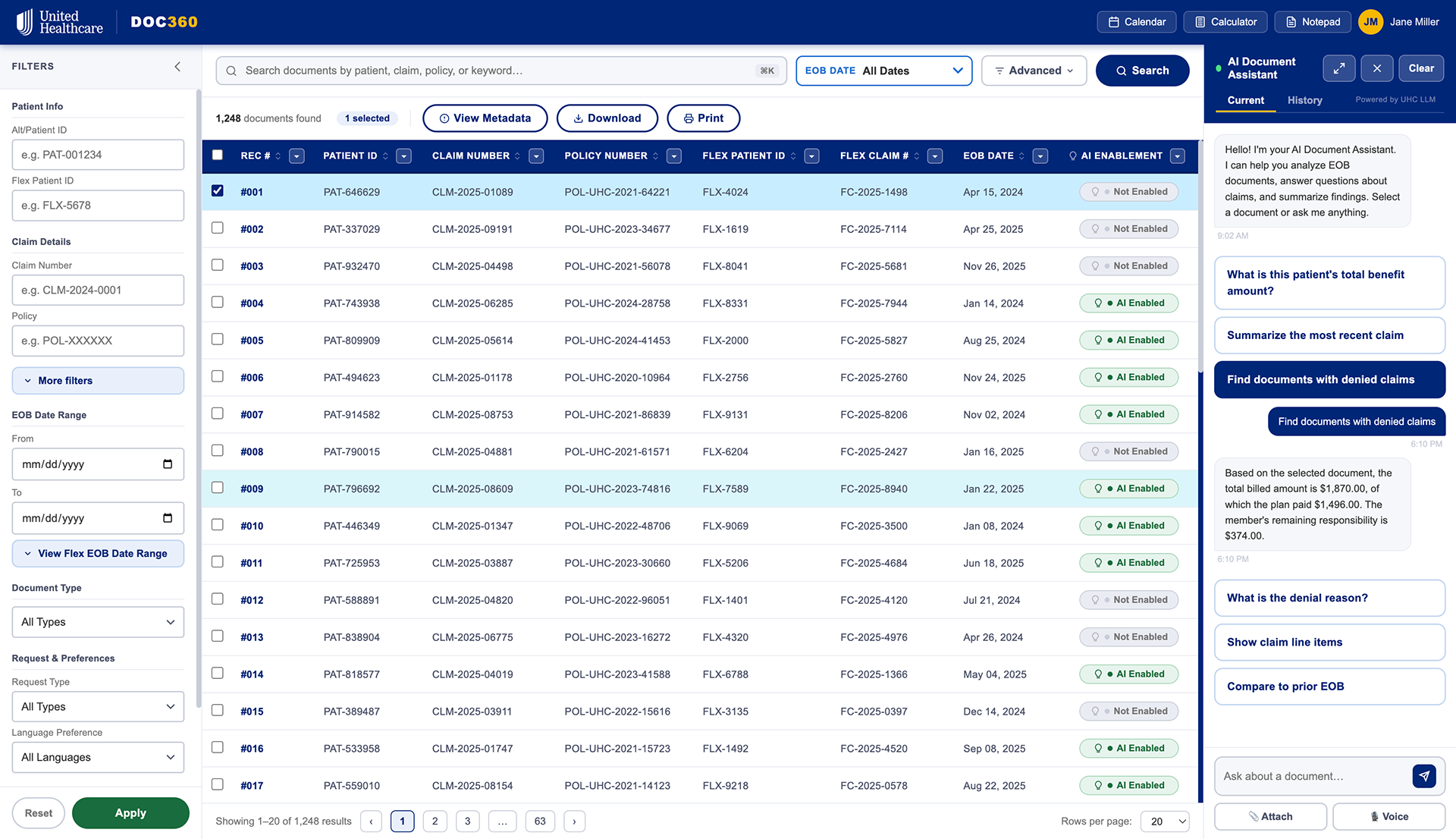

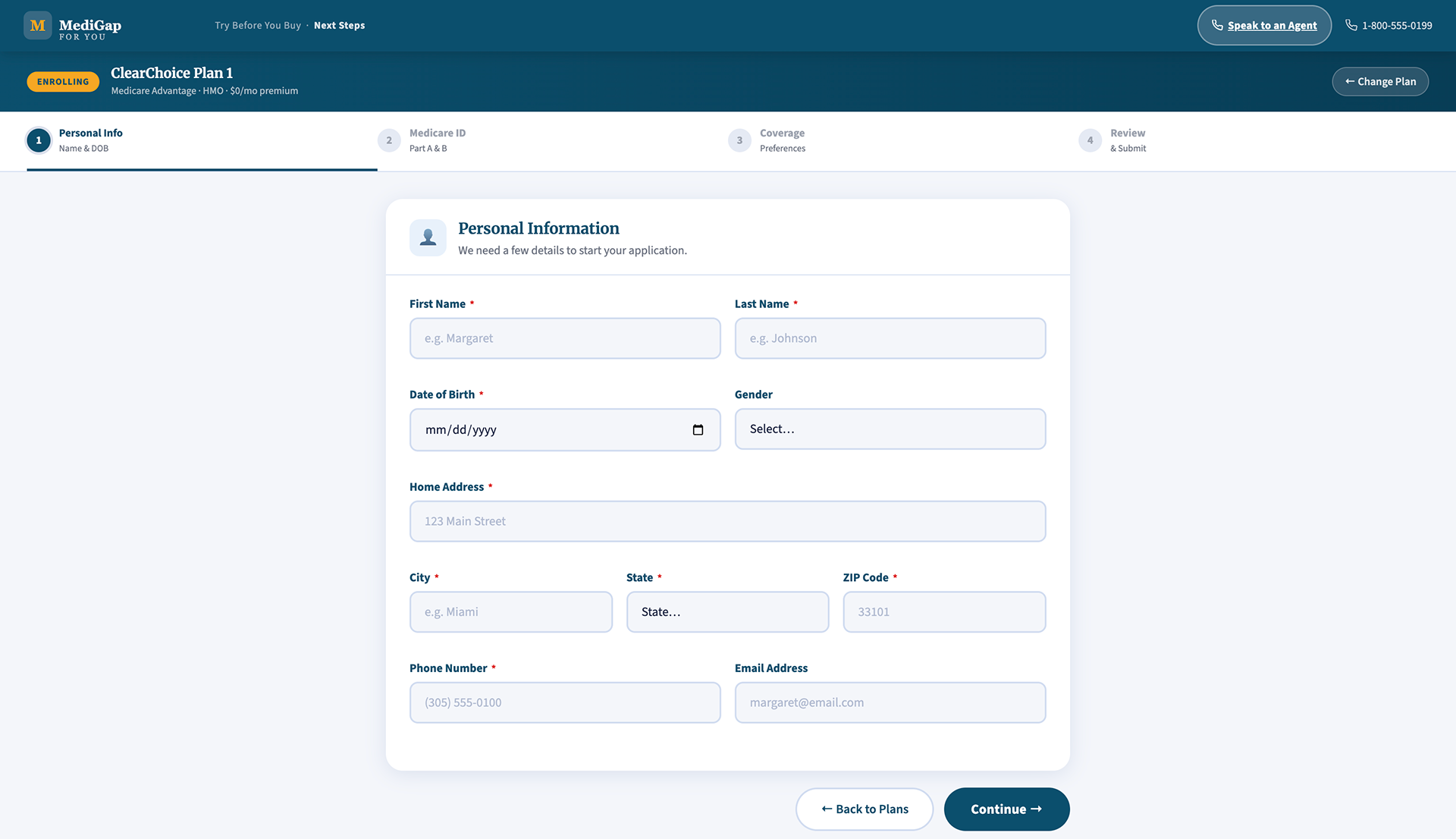

Upon choosing to fill out their application, the user goes through a thorough application process.